AI Agents Explained: 5 Stages of Growth

AI agents are not just better chatbots. Use this five-stage model to understand output, conversation, tool use, autonomous agency, and multi-agent systems.

The short version: AI agents are not just "better chatbots." They are the next step in a growth pattern: output, conversation, tool use, autonomous planning, and multi-agent collaboration. Once you see those five stages, the AI market stops looking like random product launches and starts looking like a species learning how to interact with the world.

The usual framing is that AI progress is "a model race." A better lens is developmental: AI is moving through five stages that loosely mirror a child's development (cry, speak, hands, independence, society). Once you see the stages, the headlines stop feeling random.

If you already see the five-stage shape and want the implications, skip ahead to stage 5 for the multi-agent layer. If "AI is just text in, text out" is still your mental model, read on.

Most people explain AI progress as a model race.

GPT gets better. Claude gets better. Gemini gets better. Context windows grow. Benchmarks move. Prices fall. New demos arrive every week.

That view is useful, but it misses the deeper pattern.

The real story is not only that models became smarter. The real story is that AI changed how it interacts with the world.

That is the lens I use now:

Intelligence is not just knowledge. Intelligence is an interaction pattern.

A system that can only emit text is one kind of intelligence. A system that can hold context is another. A system that can call tools is another. A system that can plan, inspect, act, and repair its own work is another. A system that can coordinate with other specialized agents is another again.

That sequence matters because it gives you a practical map.

When a new AI product launches, the useful question is not "Is this impressive?" The useful question is:

Which interaction stage is this product actually in?

Here is why this matters: the five stages are predictive. A release that improves Stage 3 (tool use) means something different from a release that improves Stage 4 (autonomy). A shared vocabulary turns AI news from noise into signal.

Here is the whole map before we go deeper.

| Stage | Human analogy | AI capability | Practical example | What changed |
|---|---|---|---|---|
| 1 | Crying | One-way output | Early ChatGPT-style prompting | AI can respond |
| 2 | Speaking | Contextual conversation | GPT-4-class dialogue | AI can follow a thread |
| 3 | Hands | Tool use | Function calling, MCP tools | AI can act through systems |
| 4 | Independence | Goal-directed agency | Coding agents, research agents | AI can plan and self-correct |
| 5 | Society | Multi-agent collaboration | Agent teams, A2A-style systems | AI can divide work |

This is not a perfect biology analogy. AI is not a human child, and models do not "grow up" in the way people do.

But as an operator mental model, the analogy is powerful because every stage upgrades the same thing: the channel between intelligence and reality.

Stage 1 is output.

Stage 2 is dialogue.

Stage 3 is action.

Stage 4 is judgment.

Stage 5 is coordination.

If you remember only one line, remember this:

The agent era begins when AI stops merely answering and starts changing the state of a system.

The first mass-market AI moment was not agency. It was output.

You typed a prompt. The model produced text. The text was often useful, sometimes wrong, frequently surprising, and occasionally brilliant.

That was enough to change the internet.

But it was not yet an agent.

In this stage, the interaction pattern is simple:

That last line is the key. The model does not act. It does not inspect your files. It does not call your calendar. It does not verify the result. It does not decide that its answer was not good enough and run another step.

It emits.

That sounds small now, but it was the necessary first stage. Before AI could become a collaborator, the world had to see that language models could produce outputs that felt useful at human scale.

Stage 1 created the demand shock.

People did not adopt ChatGPT because it had a perfect theory of the world. They adopted it because it could draft, summarize, explain, brainstorm, and rewrite fast enough to become part of daily work.

That changed expectations.

Once people saw AI produce useful language, they immediately wanted the next thing:

"Can it remember what we are talking about?"

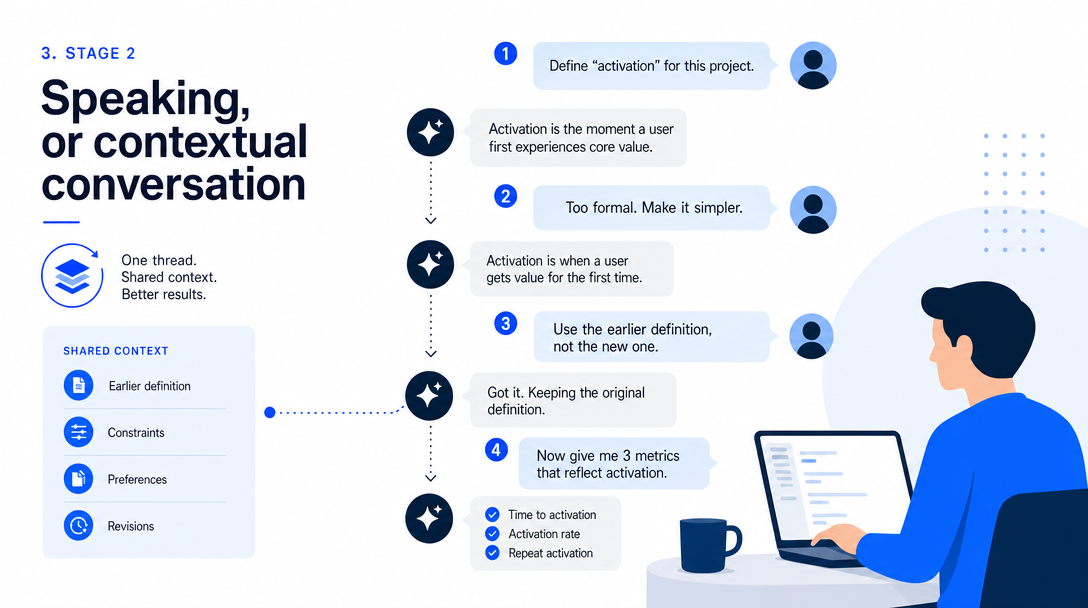

The second stage is conversation.

Not just a single answer. A thread.

The jump from one-shot output to contextual dialogue is bigger than it looks because work rarely happens in one prompt. Real work has revisions, constraints, contradictions, examples, preferences, and accumulated context.

When a model can hold the thread, the relationship changes.

You can say:

"No, that is too formal."

"Keep the structure, but make the intro sharper."

"Use the earlier definition, not the new one."

"Compare this against the previous version."

That is not just text generation. That is shared state.

Shared state is the beginning of collaboration.
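A minimal sketch of what "shared state" can look like in practice, assuming the thread is just a list of role-tagged messages. The `Conversation` class and its method names are hypothetical and chosen for illustration; they are not any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical container for a chat thread (illustration only)."""
    messages: list = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        # Every turn is appended, never overwritten, so earlier drafts
        # and constraints stay available to later turns.
        self.messages.append({"role": role, "content": content})

    def context(self) -> list:
        # What the model would see on the next turn: the whole thread.
        return list(self.messages)


thread = Conversation()
thread.add("user", "Draft an intro for the launch post.")
thread.add("assistant", "Draft v1: We are pleased to announce ...")
thread.add("user", "No, that is too formal. Keep the structure.")

# The third message only makes sense because the first two are still in context.
print(len(thread.context()))
```

The point of the sketch is the append-only history: a correction such as "too formal" has a referent only because the earlier draft is still part of what gets sent.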

In human terms, a toddler does not become useful because they know more words. They become easier to work with because they can connect one sentence to the next. The same pattern shows up in AI products. The more context a system can carry, the more it can participate in an ongoing task instead of answering isolated questions.

This is why context windows, memory, files, and project-level context matter so much. They are not feature polish. They are the foundation that turns an answer machine into a working partner.

But conversation still has a ceiling.

Even the best conversational model is trapped if it cannot touch anything.

It can tell you what command to run. You run it.

It can draft the email. You send it.

It can outline the database migration. You apply it.

At Stage 2, the model can think with you, but it still needs your hands.

Tool use is the stage many people underestimate.

It sounds like an implementation detail:

"The model can call a function."

"The assistant can use tools."

"The app supports MCP."

But this is the point where AI crosses from language into work.

OpenAI describes function calling as a way to connect models to external tools and systems. That simple idea changes the interaction pattern: the model can choose a structured action, the software can execute it, and the result can come back into the model's context.

MCP pushes the same direction from another angle. The official MCP docs describe it as an open standard for connecting AI applications to external systems such as local files, databases, tools, and workflows.
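The choose, execute, return cycle described above can be sketched in a few lines. This is a toy under stated assumptions: `fake_model` is a hard-coded stand-in for a real model, `get_weather` returns made-up data, and the message and tool-call shapes are invented for illustration rather than taken from the OpenAI API or MCP.

```python
import json


def get_weather(city: str) -> dict:
    # Stand-in for a real external system; the forecast is made up.
    return {"city": city, "forecast": "sunny"}


# The software, not the model, owns the mapping from names to functions.
TOOLS = {"get_weather": get_weather}


def fake_model(messages: list) -> dict:
    """Hypothetical model: asks for one tool call, then answers in text."""
    last = messages[-1]
    if last["role"] == "tool":
        data = json.loads(last["content"])
        return {"type": "text",
                "content": f"It is {data['forecast']} in {data['city']}."}
    return {"type": "tool_call",
            "name": "get_weather",
            "arguments": {"city": "Paris"}}


def run_turn(user_text: str):
    messages = [{"role": "user", "content": user_text}]
    while True:
        reply = fake_model(messages)
        if reply["type"] == "text":
            return reply["content"], messages
        # 1. The model chose a structured action.
        tool = TOOLS[reply["name"]]
        # 2. The software executes it.
        result = tool(**reply["arguments"])
        # 3. The result goes back into the model's context.
        messages.append({"role": "tool", "content": json.dumps(result)})


answer, transcript = run_turn("What is the weather in Paris?")
print(answer)
```

Note that the model never runs anything itself in this sketch. It only emits a request, and the surrounding program decides whether and how to honor it.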

This is the "hands" stage.

Before hands, AI lives in text.

After hands, AI can change state.

It can search.

It can read files.

It can query a database.

It can call an API.

It can run a command.

It can update a ticket.

It can create a draft in another system.

That is why tool calling is not just a developer feature. It is the bridge between model intelligence and operational reality.

Here is the practical distinction:

| Capability | Chatbot stage | Tool-use stage |
|---|---|---|
| Answer a question | Yes | Yes |
| Fetch fresh data | Only if pasted in | Yes |
| Use private files | Only if uploaded | Yes, through controlled access |
| Execute an action | No | Yes |
| Verify through a tool | No | Yes |
| Build a workflow loop | Manual | Possible |

Tool use also introduces a new kind of risk.

When AI could only write text, a bad answer was mostly an information problem. When AI can call tools, a bad decision can become a system problem.

That is why permissions, logs, approval gates, and sandboxing become important as soon as you enter Stage 3.

Hands are powerful because they can build.

Hands are dangerous because they can break.
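One way to picture permissions, logs, and approval gates working together is a single wrapper that every tool call must pass through. The sketch below is illustrative only: `guarded_call`, `RISKY_TOOLS`, and the two toy tools are hypothetical names, and sandboxing is not shown.

```python
RISKY_TOOLS = {"delete_file"}  # assumption: you decide what counts as risky


def guarded_call(name, args, tools, approve, audit_log):
    """Run a tool only if it is permitted and, when risky, approved."""
    # Permission check: tools that were never granted cannot be called.
    if name not in tools:
        audit_log.append(("denied", name))
        raise PermissionError(f"tool not permitted: {name}")
    # Approval gate: risky actions wait for a human (or policy) decision.
    if name in RISKY_TOOLS and not approve(name, args):
        audit_log.append(("rejected", name))
        return None
    # Log before executing so there is a record even if the tool fails.
    audit_log.append(("executed", name))
    return tools[name](**args)


tools = {
    "read_file": lambda path: f"contents of {path}",
    "delete_file": lambda path: f"deleted {path}",
}
log = []
deny_all = lambda name, args: False  # stand-in for a human who says no

read_result = guarded_call("read_file", {"path": "notes.txt"}, tools, deny_all, log)
delete_result = guarded_call("delete_file", {"path": "notes.txt"}, tools, deny_all, log)
print(log)
```

The design choice worth noticing is that the gate lives outside the model. The model can request a deletion as often as it likes; nothing happens until the approver says yes.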

Before stage four, AI executed your asks. After, AI proposes courses of action and waits for your sign-off. The shift is small in code and large in posture; the agent becomes a partner instead of a typewriter.

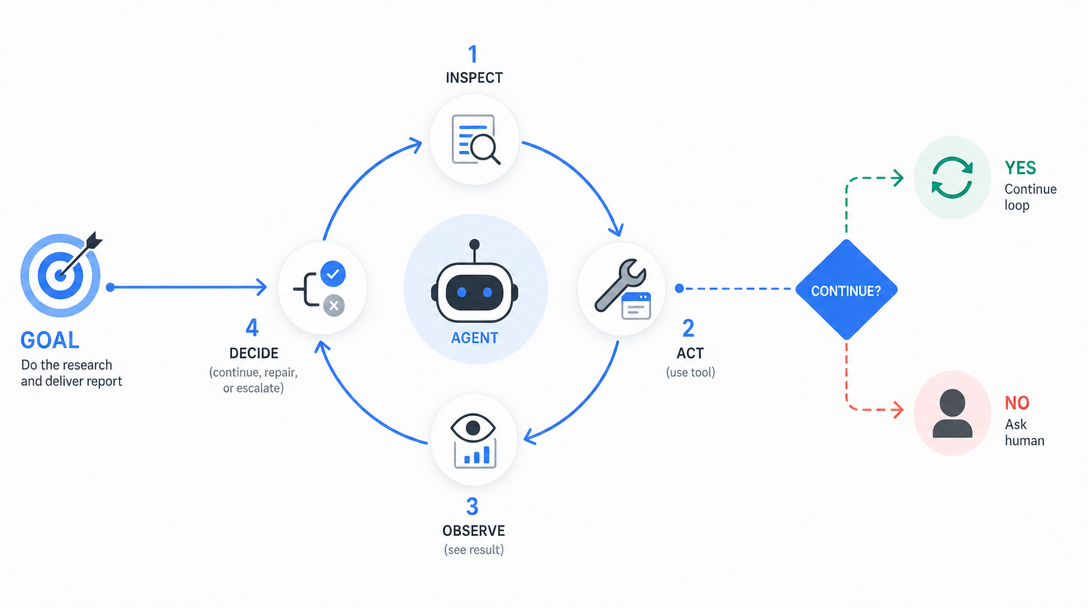

An AI agent is not just a chatbot with tools.

A useful agent has a goal, a working context, available tools, a way to choose steps, and a way to evaluate whether the work is good enough.

IBM defines an AI agent as a system that autonomously performs tasks by designing workflows with available tools. Google Cloud similarly emphasizes autonomy, task automation, tool use, and the ability to interact with the world.

That is the line I care about:

An agent designs part of the path.

A normal automation workflow follows a route you already designed.

An agent receives a target and figures out some of the route itself.

That difference changes how it feels to use the system.

With a workflow, you think in nodes.

With an agent, you think in delegation.

You can ask a coding agent to inspect a repository, identify where a bug likely lives, propose a patch, edit files, run tests, read the failures, and adjust. You can ask a research agent to gather sources, filter weak evidence, build a brief, and flag uncertainty. You can ask an operations agent to watch a queue, triage exceptions, and escalate only the cases it cannot resolve.

The important part is not that the agent succeeds every time.

It does not.

The important part is that the agent participates in the loop:

That loop is what separates Stage 4 from Stage 3.

Stage 3 gives AI hands.

Stage 4 gives AI a work cycle.
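A work cycle can be sketched as a bounded loop: propose a step, check the result, feed the outcome back in, and stop when the goal is met or the budget runs out. Everything here is a toy under assumptions: `run_agent`, `propose`, and `check` are hypothetical names, and the "task" is guessing a number whose square is 49 rather than anything a real agent would do.

```python
def check(candidate: int):
    """Verification step: returns (done, feedback)."""
    square = candidate * candidate
    if square == 49:
        return True, "ok"
    return False, "too low" if square < 49 else "too high"


def propose(history: list) -> int:
    """Planning step: narrow the search range using earlier feedback."""
    low, high = 0, 10
    for candidate, feedback in history:
        if feedback == "too low":
            low = max(low, candidate + 1)
        elif feedback == "too high":
            high = min(high, candidate - 1)
    return (low + high) // 2


def run_agent(max_steps: int = 5):
    history = []
    for _ in range(max_steps):           # bounded autonomy: a hard step budget
        candidate = propose(history)     # choose the next step
        done, feedback = check(candidate)  # inspect the result
        history.append((candidate, feedback))  # carry the outcome forward
        if done:
            return candidate, history
    return None, history                 # give up and report, do not loop forever


result, trace = run_agent()
print(result, trace)
```

What separates this from a one-shot tool call is the `history` argument: each new proposal depends on what the previous attempts revealed, and the step budget keeps a failing run from continuing indefinitely.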

But this is also where beginner expectations break.

An agent is not magic autonomy. It is bounded autonomy.

If you give it a vague goal, unlimited tools, no tests, no permission model, and no review process, you are not building an agentic workflow. You are creating a failure amplifier.

The mature version of Stage 4 looks less like "AI does everything" and more like this:

| Agent responsibility | Human responsibility |
|---|---|
| Explore the working context | Define the goal |
| Propose a plan | Set constraints |
| Execute reversible steps | Approve risky actions |
| Run checks | Judge business correctness |
| Report uncertainty | Decide tradeoffs |

That is why the best agents often feel like very fast junior collaborators. They can do real work, but the quality of the system depends on how clearly you define the operating boundary.

The most common failure mode in AI predictions is mapping new capabilities to old categories. Early agent demos got dismissed as fancy chatbots because the chatbot rubric was the only one in scope. New stage, new rubric.

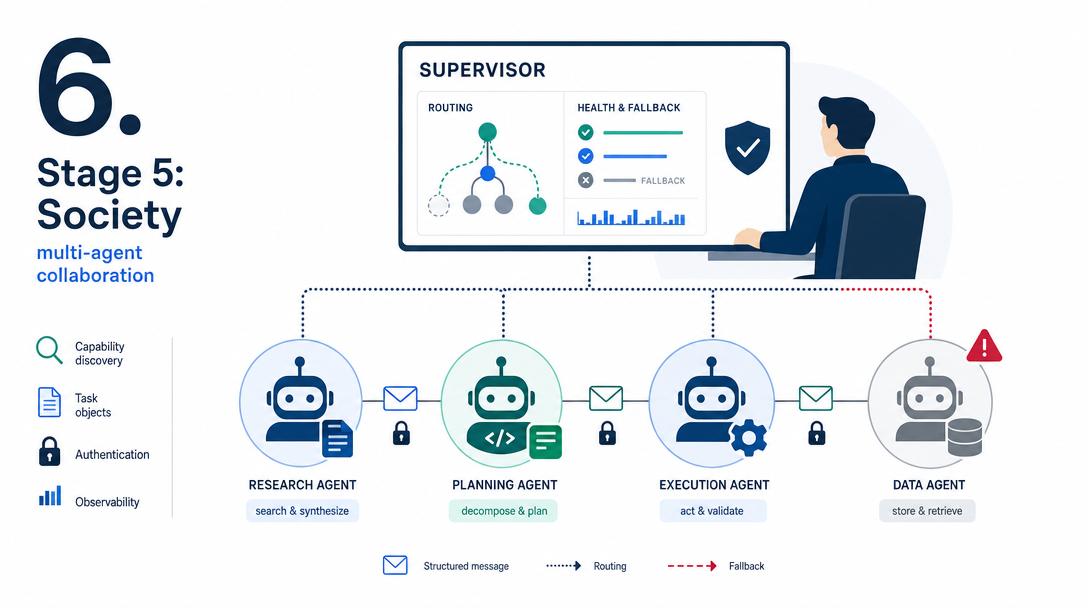

The fifth stage is not a bigger single agent.

It is multiple specialized agents working together.

This matters because individual intelligence scales poorly. One person can be brilliant and still have only one attention stream. The same is true for agents. A single agent can plan, call tools, and iterate, but it becomes overloaded when the task requires many domains at once.

Human civilization did not scale because every individual became a genius. It scaled because people divided labor.

One person farms. One person builds tools. One person records transactions. One person teaches. One person enforces rules. The system becomes more capable than any individual.

Multi-agent systems follow the same logic.

Google's Agent2Agent protocol announcement frames the problem directly: agents need to collaborate across siloed systems and applications, even when built by different vendors or frameworks. A2A focuses on agent interoperability, capability discovery, task management, collaboration, and secure communication.

That is the beginning of AI society.

Not because the agents are conscious.

Not because they are people.

But because the coordination pattern becomes social:

| Social function | Agent-system equivalent |
|---|---|
| Find the right specialist | Capability discovery |
| Agree on the task | Task object and status |
| Exchange work artifacts | Messages and structured outputs |
| Maintain trust | Authentication, permissions, logs |
| Divide labor | Specialized agents |
| Repair failure | Monitoring and fallback agents |
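Two rows of that mapping, capability discovery and a task object with status, can be sketched in-process. This is not the A2A protocol or its wire format; `Task`, `Agent`, and `Registry` are hypothetical names used only to show the coordination pattern, and the status strings are invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Set


@dataclass
class Task:
    kind: str
    payload: str
    status: str = "submitted"
    owner: Optional[str] = None
    result: Optional[str] = None


@dataclass
class Agent:
    name: str
    capabilities: Set[str]
    handle: Callable[[str], str]


class Registry:
    def __init__(self) -> None:
        self._agents: List[Agent] = []

    def register(self, agent: Agent) -> None:
        self._agents.append(agent)

    def discover(self, kind: str) -> List[Agent]:
        # Capability discovery: who claims to handle this kind of task?
        return [a for a in self._agents if kind in a.capabilities]

    def dispatch(self, task: Task) -> Task:
        candidates = self.discover(task.kind)
        if not candidates:
            task.status = "failed"  # no specialist owns this responsibility
            return task
        agent = candidates[0]
        task.owner = agent.name     # one agent owns the task at a time
        task.status = "working"
        task.result = agent.handle(task.payload)
        task.status = "completed"
        return task


registry = Registry()
registry.register(Agent("summarizer", {"summarize"}, lambda text: text[:20]))
registry.register(Agent("shouter", {"uppercase"}, str.upper))

done = registry.dispatch(Task(kind="uppercase", payload="ship it"))
lost = registry.dispatch(Task(kind="translate", payload="ship it"))
print(done.owner, done.status, done.result)
print(lost.status)
```

Even in a toy like this, the task carries its own owner and status, which is what lets a monitor or fallback agent see that the "translate" request found no specialist.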

This is where the market will get noisy.

Many "multi-agent" demos are just multiple prompts in a trench coat. A real multi-agent system needs more than role names. It needs state, routing, permissions, observability, fallback behavior, and a reason why multiple agents are better than one.

The diagnostic question is simple:

Does each agent own a real responsibility, or is the system just pretending to have a team?

If the answer is the second one, you do not have AI society. You have a diagram.

The five stages are not random.

You cannot safely coordinate agents that cannot act.

You cannot build reliable tool use without context.

You cannot delegate goals to a system that can only emit one-shot text.

The growth order is: output, conversation, tool use, agency, multi-agent collaboration.

That order gives you a product filter.

When a vendor says "agent," ask:

| Question | What it reveals |
|---|---|
| Can it preserve context across the task? | Stage 2 maturity |
| Can it call tools safely? | Stage 3 maturity |
| Can it inspect results and self-correct? | Stage 4 maturity |
| Can it ask for approval at risk points? | Operational maturity |
| Can it coordinate with other agents? | Stage 5 maturity |
| Can I see logs and permissions? | Production maturity |
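The stage questions in the table can be turned into a rough placement function. The sketch assumes the stages are cumulative, so a product only counts as Stage N if it also passes every earlier stage. That is one reading of the ordering argument above, not a formal rule, and the flag names are hypothetical. The two operational questions (approval and logs) are left out because they measure maturity rather than stage.

```python
# Ordered ladder: each entry is (capability flag, stage it unlocks).
LADDER = [
    ("keeps_context", 2),
    ("calls_tools", 3),
    ("self_corrects", 4),
    ("coordinates_agents", 5),
]


def highest_stage(capabilities: dict) -> int:
    """Return the highest stage reached without skipping an earlier one."""
    stage = 1  # anything that emits output is at least Stage 1
    for flag, level in LADDER:
        if not capabilities.get(flag, False):
            break  # a missing rung stops the climb
        stage = level
    return stage


print(highest_stage({"keeps_context": True, "calls_tools": True}))
print(highest_stage({"calls_tools": True}))
```

Under this assumption, a product that calls tools but cannot hold context across a task is placed at Stage 1, which matches the claim that reliable tool use depends on context.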

Most products that call themselves agents are somewhere between Stage 2 and Stage 4.

That is not a criticism. It is useful to know.

If you expect Stage 5 behavior from a Stage 3 tool, you will be disappointed. If you treat a Stage 4 agent like a harmless chatbot, you will create risk.

The map protects you from both mistakes.

As of 2026, the market is moving from tool use into agency and early multi-agent infrastructure.

You can see the shift in several places:

The exact winners will change.

The pattern will not.

The future of AI will not be one giant chatbot sitting above every workflow. It will be a layered system: models, tools, memory, permissions, agents, protocols, marketplaces, and monitoring.

That is why the next useful skill is not memorizing every product name.

The next useful skill is knowing how to place a product on the interaction ladder.

Two predictions I am willing to make from inside the five-stage map this year.

Prediction 1: stage three matures into reliable tool chains. Today, multi-step tool use is impressive when it works and disappointing when it does not. By the end of 2026, the failure modes will be predictable enough to plan around, which means real workflows can depend on agent tool chains, not just hope to.

Prediction 2: stage four shifts from demo to product. Long-horizon autonomous agents (a week, a project, a campaign) are still rough today. By 2027, the autonomy class of products will exist as ordinary tools, not as research previews.

Save this article. Check the predictions in twelve months. The corrections are at least as informative as the hits.

Three things this article does not try to do, so you know what to read elsewhere for them.

Implementation details. This article gives you the shape, not the code. If you want to build a stage-three agent, the map points the direction; the actual builder needs an agent runtime tutorial, not a thinking framework.

Hard timelines. The four-million-vs-four-years comparison is suggestive, not predictive. Silicon evolution is happening faster than carbon, but the exact cadence of stage-five emergence is unknown. Treat the timing as a useful prior, not a calendar entry.

Ethical assessment. This article is descriptive, not normative. The map describes what is happening; it does not argue what should happen. Both kinds of conversation are important; this article is the descriptive one.

Reading the map is the start. Three habits make it actually useful in your week.

Habit 1: stage-tag the news. Every time you read an AI announcement, pause for ten seconds and ask which stage this advances. The habit takes about two weeks to feel automatic and dramatically improves your prediction sense.

Habit 2: stage-tag your own asks. Watch your prompts for one day. Most beginners ask at stage one even when their tool supports stage three or four. Naming the stage is half the fix.

Habit 3: stage-tag the gap. When something feels off about an AI tool, the gap is usually between the stage you expected and the stage the tool actually supports. Naming the gap turns frustration into a clear next step: find a tool at the right stage, or lower the expectation by one stage.

If you are learning AI, do not try to jump straight into "multi-agent systems."

Follow the same growth path.

| Your learning stage | Practice |
|---|---|
| Output | Ask clear questions and compare answers |
| Context | Work on one real project across multiple turns |
| Tools | Let AI use files, search, commands, or APIs in a controlled environment |
| Agency | Delegate a small goal with tests and review |
| Society | Split a workflow into specialist roles only when one agent becomes a bottleneck |

This is the operator path.

It is also why I tell beginners to start with asking better before they chase complex automation. If you cannot define the goal clearly, more agents will not help. They will just produce more noise.

Read AI Agents for Beginners: Ask Better if you need the first step. Read AI Stack Explained if the product layers still feel blurry. Then come back to this five-stage map and use it as your filter.

If you want the next layer after this map — what an agent actually needs to live in production (memory, workspace, channels, security, scheduling) — the OpenClaw deep series walks through each piece in order.

Take the last AI question you asked any tool. Find it in your history if you can; otherwise reconstruct from memory.

Step 1: tag the stage of the ask. Were you asking for output (stage one), context-aware understanding (stage two), action (stage three), judgment (stage four), or coordination across agents (stage five)?

Step 2: tag the stage the tool actually serves at that moment. A model in chat-only mode is stage two. A model with tool use enabled is stage three. An agent runtime is stage four. A multi-agent system is stage five.

Step 3: see if the stages match. A stage-three ask served by a stage-two tool will produce a polite refusal or a generic answer. A stage-two ask served by a stage-four tool will use too much horsepower for the question.

Most early AI frustration comes from stage mismatch. The exercise above takes ninety seconds and turns invisible mismatch into a fixable signal.
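The three-step exercise reduces to comparing two numbers. A small sketch, with `diagnose` and its return labels as hypothetical names:

```python
def diagnose(ask_stage: int, tool_stage: int) -> str:
    """Compare the stage of an ask with the stage the tool serves."""
    for stage in (ask_stage, tool_stage):
        if stage not in range(1, 6):
            raise ValueError("stages run from 1 to 5")
    if ask_stage > tool_stage:
        # The tool cannot do what was asked: expect a refusal or a generic answer.
        return "under-served"
    if ask_stage < tool_stage:
        # The tool can do more than was asked: more horsepower than needed.
        return "over-served"
    return "match"


print(diagnose(3, 2))
print(diagnose(2, 4))
```

An "under-served" result suggests moving to a tool at a higher stage or lowering the ask; an "over-served" result is usually a cost question rather than a capability one.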

Three simplifications I make to keep the map teachable, with the caveats so you know what you are accepting.

Simplification 1: stages are presented as discrete. In reality they overlap and feed each other. A model can be doing stage one (generating output) and stage three (calling a tool) in the same turn. The discrete view is a teaching scaffold.

Simplification 2: progress is presented as forward-only. In reality, model upgrades sometimes regress on earlier stages while advancing on later ones. The forward-only view is mostly true, with quiet exceptions worth knowing about.

Simplification 3: the map ignores costs. A stage-four agent costs more per task than a stage-two model, often by an order of magnitude. The map says nothing about whether you should pay that cost; that depends on the task. Use the map to choose the level; use a separate calculation to choose the budget.

Three concrete moves from this map this week, ranked by ROI.

Move 1: tag your tools. Open your AI tool list and write the stage next to each. The mismatch between "what stage I treat this as" and "what stage it actually supports" is where most week-one friction lives.

Move 2: tag your asks for one day. Notice how many of your prompts land at stage one even when the tool can do stage three or stage four. The reframe is half the fix; the rewrite of one or two prompts is the other half.

Move 3: pick one stage to practice. If your asks are mostly stage one, deliberately try three stage-three asks this week (asks that involve tool calls). The discomfort is the learning.

The most striking line in this article is the speed gap: human cognition took roughly four million years to walk the five stages, while AI has reached stage four in about four years.

The comparison is suggestive, not literal. Carbon evolution had constraints silicon does not have. Each generation took a generation. Mutations were random. Energy budgets were tight. Branches that hit dead ends could not be checkpointed and resumed.

Silicon has none of those bounds. Iterations happen in days, mutations are deliberate and parallel, energy comes from a wall socket, and dead-end branches just get archived for later. Each constraint that gated carbon evolution is relaxed by orders of magnitude on silicon.

The honest implication for stage five: the social layer of AI will not require centuries to stabilize. The infrastructure (multi-agent runtimes, durable identity, communication protocols) is being built right now, in public, in real time. The compression that turned millions of years into four for stages one through four will not stop at stage five.

The most important shift in AI is not that computers became more fluent.

It is that intelligence is gaining interfaces to reality.

Text was the first interface.

Context made it collaborative.

Tools made it operational.

Agents made it goal-directed.

Multi-agent systems make it social.

That is why this moment feels so strange. We are not only watching software improve. We are watching a new work pattern form.

The people who benefit from it will not be the people who memorize the most model names.

They will be the people who understand the interaction pattern early enough to build with it.

Hand off early. Verify carefully.

— Leo

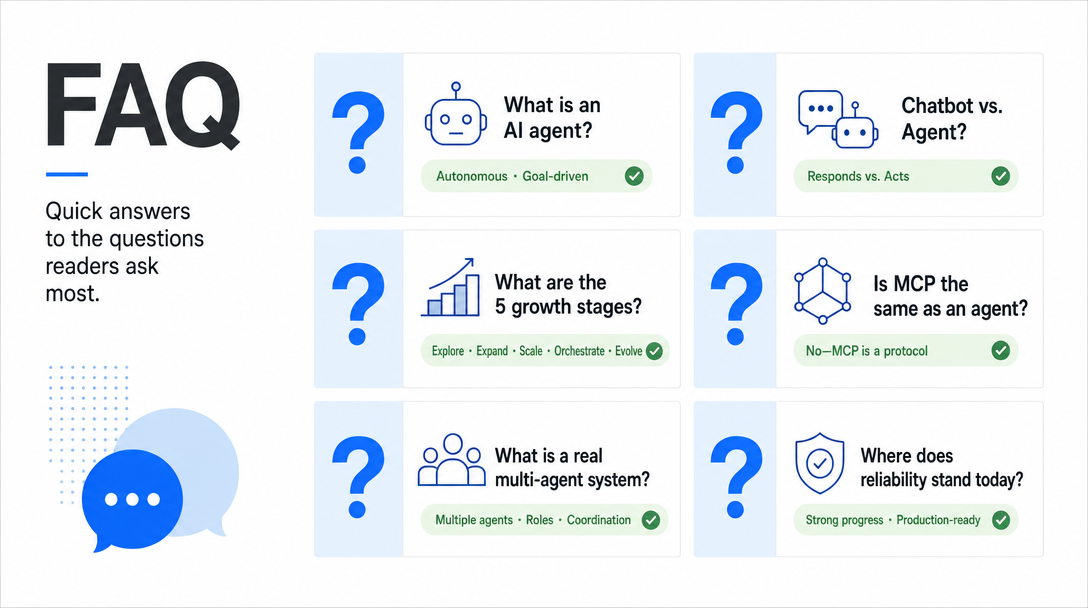

What is an AI agent?

An AI agent is a system that can pursue a goal through multiple steps, usually by using tools, memory, planning, and feedback. A chatbot mainly answers. An agent can decide what step to take next.

What is the difference between a chatbot and an AI agent?

A chatbot is mostly conversational. An AI agent can plan, call tools, inspect results, and continue working toward a goal. The difference is not the interface. The difference is the work loop behind it.

What are the five stages?

The five stages are one-way output, contextual conversation, tool use, autonomous agency, and multi-agent collaboration. Each stage upgrades how AI interacts with the world.

Is MCP an AI agent?

No. MCP is a protocol for connecting AI applications to external systems. It gives agents and AI applications a standard way to access tools, data, and workflows. An agent is the goal-directed system that may use those connections.

What is a multi-agent system?

A multi-agent system uses multiple specialized agents that collaborate or coordinate to solve a task. A real multi-agent system needs responsibility boundaries, state, routing, permissions, monitoring, and fallback behavior.

Should beginners start with multi-agent systems?

No. Beginners should first learn prompting, context, tool use, and safe delegation. Multi-agent systems are useful only after one-agent workflows become a bottleneck.

Are AI agents reliable?

Some are reliable for bounded tasks with strong tools, logs, tests, and human approval gates. They are not reliable when given vague goals, broad permissions, and no verification loop.

OpenAI Help Center: Function Calling in the OpenAI API

Model Context Protocol: What is MCP?

Google Developers Blog: Announcing the Agent2Agent Protocol

IBM: What are AI agents?

Google Cloud: What are AI agents?

Stanford HAI: 2026 AI Index Report
