AI Skills That Matter: Know What You Want

The most valuable AI skills are not tool tricks. They are taste, judgment, better questions, metacognition, authenticity, and systems thinking.


The short version: The most valuable AI skills are not tool tricks. Tools change. Interfaces change. Models change. The durable skill is learning how to define outcomes, ask better questions, judge quality, and design systems where AI can do real work without replacing your taste.

Many people assume AI skills are about prompt tricks and tool stacks. The durable skill is defining outcomes and judging quality: tools change every season, while outcome thinking compounds over years.

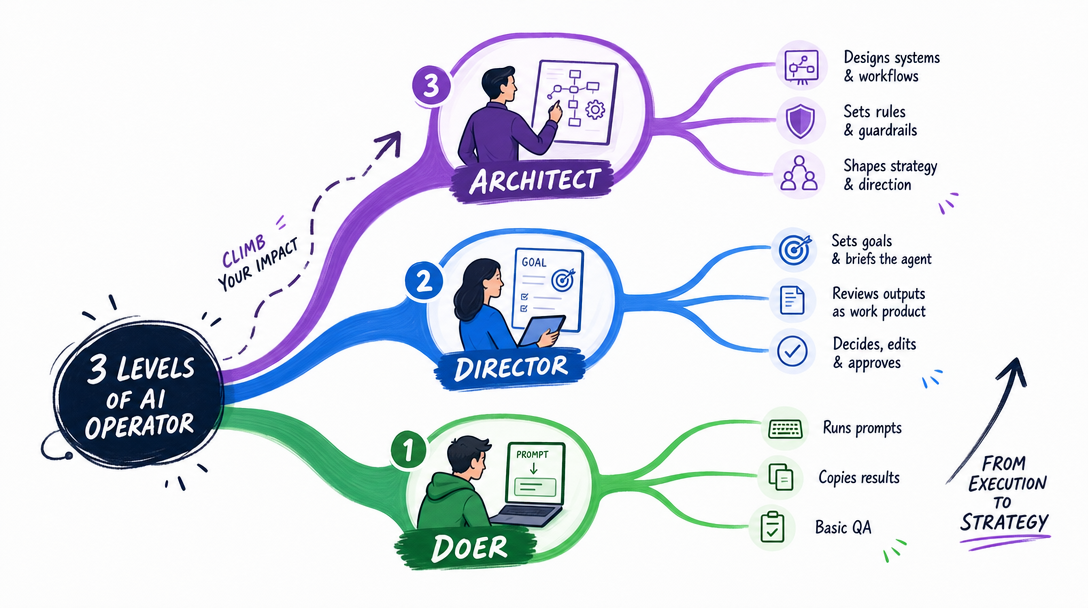

If you already operate as a director or architect, skip ahead to the five compounding skills. If you are still stuck in doer mode, start with the three-level model below.

I changed tools four times.

Make. n8n. Claude Code. OpenClaw.

Each time, I thought I was upgrading software.

Looking back, the real upgrade was not the tool. It was the way I thought.

In the old mode, my first question was:

What do I know how to do?

In the new mode, my first question is:

What do I want to exist?

That sounds small. It is not.

It is the difference between being trapped inside your current skills and using AI to reach beyond them.

This article is not another list of AI tools. It is about the AI skills that matter when execution becomes cheaper: taste, judgment, better questions, metacognition, authenticity, and systems thinking.

In July 2024, I wanted to build an automation.

The job was simple on paper: collect industry news from RSS feeds, translate it, format it, and publish it.

I opened Make and started dragging modules.

RSS module. Translation module. Formatting module. Publishing step. API settings. Test run. Error. Fix. Another test. Another error.

It took two days.

I was proud of it because it worked.

Fast forward to March 2026. I needed almost the same thing again.

This time, Make did not even enter my mind.

I opened Claude Code and described the outcome:

Build a workflow that collects technology news from these RSS sources,

translates it into my writing style,

and prepares it for publication.

Five minutes later, I had a better starting point than the workflow that had taken me two days to build.

The point is not that "AI is fast."

The point is that my first thought had changed.

Old thought:

I know Make, so I will solve this with Make.

New thought:

I want a working news-to-publication workflow.

The old question starts with your current ability.

The new question starts with the desired result.

That is the real shift.

Before AI-native thinking, you measured productivity by hours worked. After it, you measure outcomes shipped per week. Under AI, the clock-time view loses and the outcome view wins.

Here is the shift in one table.

| Dimension | Old thinking | AI-native thinking |
|---|---|---|
| Starting point | What skill do I have? | What result do I want? |
| Bottleneck | My current ability | My ability to define the outcome |
| Learning mode | Learn the tool first | Describe the task first |
| Core value | Execution | Judgment |
| New task response | Study before doing | Specify, delegate, verify |
| Failure cost | High, because you rebuild by hand | Lower, because you can regenerate and iterate |
| One-person capacity | One person doing tasks | One person directing workflows |

This does not mean technical skill is useless.

The opposite is true.

People who understand the work can describe better requirements. If you know how websites work, you can ask Claude Code for a better website. If you understand workflows, you can design better automations. If you understand writing, you can reject generic drafts faster.

But "knowing how it works" is not the same as "doing every step yourself."

That is where many people get stuck.

They think the value is in pushing every button.

Increasingly, the value is in knowing which buttons should exist.

Here is why this matters: in my experience, most "AI is not useful" complaints come from doer-mode users asking AI to do small tasks. The same model becomes powerful the moment a director-mode user asks it to design something.

I think of the AI learning curve in three levels.

Most people are still at Level 1.

| Level | Role | Core action | Typical failure |
|---|---|---|---|
| 1 | Doer | You do the work; AI helps a little | You use AI like a faster search box |
| 2 | Director | You describe the outcome; AI executes | You give vague instructions and blame the model |
| 3 | Architect | You design the system; AI runs repeatable loops | You automate before you know what to judge |

At Level 1, AI is an assistant for small pieces.

You ask for a summary. You rewrite a paragraph. You translate a sentence. You check a syntax error.

This is useful. It is also limited.

The doer still carries the whole workflow in their own hands. AI only helps around the edges.

This is why some people use AI for months and still say, "It is not that impressive."

They are using a new tool with an old mental model.

They bought a car and used it as a chair.

At Level 2, your job changes.

You stop asking AI to help with fragments and start describing outcomes.

Bad request:

Write an article.

Better request:

Write a practical article for non-technical founders.

The topic is how to use Claude Code for a weekly research workflow.

Use a direct, experience-based tone.

Include a failure example, a table, and a verification checklist.

Avoid tool hype.

The second request is longer, but length is not the point.

Structure is the point.

Good direction includes context, intent, constraints, output format, and verification.

That is why "prompt engineering" is the wrong mental model for most beginners. It makes the skill sound like magic phrasing.

The real skill is clearer delegation.

At Level 3, you are not just delegating tasks.

You are designing systems.

You ask:

This is where one person starts to feel like a small team.

Not because AI is magic.

Because repeatable work stops living only in your head.

I have used agent workflows where one agent researches, another drafts, another checks structure, and another prepares publishing assets. The human role is not to disappear. The human role is to set direction, judge quality, and decide what deserves to exist.

That is a much higher-value seat.

I hear this all the time:

I used ChatGPT for months. It is fine, but not life-changing.

I understand the feeling. I had it too.

The mistake is usually this: people use AI for low-level cognitive offloading only.

Cognitive offloading means moving mental work into an external tool.

A calculator is cognitive offloading.

GPS is cognitive offloading.

AI is cognitive offloading too, but there are two levels.

| Offloading level | Example | Result |
|---|---|---|
| Low-level offloading | Translate this paragraph | Saves effort |
| High-level offloading | Analyze this paper, find three objections, score each objection by strength | Expands thinking |

Low-level offloading saves hands.

High-level offloading expands the mind.

The difference is not the model. It is the task you give it.

When I use AI for topic selection, I do not ask:

Give me content ideas.

I ask it to gather signals, compare angles, score ideas by audience value, identify what I can uniquely say, and flag topics that are already too crowded.

AI does not make the final decision.

It improves the research surface so my decision is better.

That is the pattern.

AI should not replace judgment. It should raise the quality of what judgment sees.

The Harvard Business School and BCG field experiment on generative AI popularized two useful collaboration patterns: centaurs and cyborgs.

I use both.

| Mode | How it works | Best for | Risk |
|---|---|---|---|
| Centaur | Human and AI split tasks clearly | Repeatable work, research, publishing, reporting | Too rigid if the task is creative |
| Cyborg | Human and AI work tightly together | Coding, strategy, design, writing | Easy to lose track of who is judging quality |

Centaur mode is clean.

You decide what the human does and what the AI does.

For example:

Human: choose topic and angle.

AI: gather sources, draft outline, prepare table.

Human: judge argument and rewrite key sections.

AI: format, check links, prepare FAQ.

Cyborg mode is messier.

You and the AI shape the work together in rapid loops.

This is what happens when I code with Claude Code. I describe a feature. Claude writes a draft. I see a better direction. Claude refactors. I notice a missing edge case. Claude adds a test. The output belongs to the loop, not to either side alone.

Beginners should usually start with centaur mode.

Clear division reduces risk.

Once you understand the model's strengths and failure modes, cyborg mode becomes powerful.

If execution is getting cheaper, what becomes more valuable?

Not "knowing every tool."

Tools decay.

These five AI skills compound.

AI can generate 100 options.

Only you can decide which one is worth keeping.

This is not a soft skill. It is the central skill.

In my own content workflow, AI can produce drafts, outlines, image concepts, titles, and summaries. Most of them are not bad. They are just not right.

That distinction matters.

Bad means broken.

Not right means it misses the taste, the audience, the moment, or the point.

AI is good at producing options. Human value moves to filtering options.

The practical problem is that many beginners do not have a written standard.

They feel something is wrong, but they cannot name what is wrong.

That makes AI collaboration weak, because the model cannot improve against a standard you have not expressed.

For example, if I am judging an AI-written article, I am not asking only:

Is this good?

I am asking:

Does this article say something I would actually defend?

Does it contain a real example instead of a generic claim?

Does the reader know what to do after reading it?

Did the article add information, or only rearrange familiar advice?

Those questions turn taste into an operating system.

You can do the same with code, design, research, hiring, product work, or any other field. Taste becomes useful when it becomes inspectable.

If you want to train this skill, do not ask AI for one output.

Ask for ten.

Then reject seven in writing.

The rejection is the training.

When you can explain why an option is almost right but still wrong, your judgment is becoming sharper.
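One way to make taste inspectable, sketched in Python under the assumption that you still review every option by hand: write the standard down as named checks (the four questions above), then refuse to record a rejection unless it names the failed check and gives a written reason. The check names and the `Verdict` and `review` helpers are hypothetical illustrations, not part of any tool.

```python
from dataclasses import dataclass, field

# The four review questions, written down as named checks.
# The short names are illustrative labels, not a fixed taxonomy.
STANDARD = {
    "defensible": "Does this say something I would actually defend?",
    "concrete": "Does it contain a real example instead of a generic claim?",
    "actionable": "Does the reader know what to do after reading it?",
    "adds_information": "Did it add information, or only rearrange familiar advice?",
}


@dataclass
class Verdict:
    option: str
    failed: list[str] = field(default_factory=list)  # names of checks this option failed
    note: str = ""  # the written reason, in your own words

    @property
    def kept(self) -> bool:
        return not self.failed


def review(verdicts: list[Verdict]) -> tuple[list[str], list[str]]:
    """Split options into kept and rejected; every rejection must cite the standard."""
    kept, rejected = [], []
    for v in verdicts:
        unknown = [name for name in v.failed if name not in STANDARD]
        if unknown:
            raise ValueError(f"{v.option}: unknown checks {unknown}")
        if v.kept:
            kept.append(v.option)
            continue
        if not v.note.strip():
            raise ValueError(f"{v.option}: a rejection needs a written reason")
        rejected.append(f"{v.option}: failed {', '.join(v.failed)} ({v.note})")
    return kept, rejected
```

The point of raising an error on an empty `note` is the same as the exercise: a rejection you cannot explain is a preference, not yet a standard.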

In the old internet, answers were scarce.

In the AI era, answers are cheap.

Questions become expensive.

Compare these two requests:

Analyze this market.

Compare the pricing changes of the top five Southeast Asian ecommerce SaaS products from 2024 to 2026.

Find products that raised prices without slowing user growth.

Explain what they did right.

The second request is not just more detailed.

It contains a better problem.

That is why asking is not a prompt trick. Asking is problem framing.

If you frame the wrong problem, a better model only gives you a more polished wrong answer.

I like to use a simple five-part question frame:

| Part | What it forces you to clarify | Example |
|---|---|---|
| Outcome | What should exist at the end? | A Ghost-ready article draft |
| Context | What does the model need to know? | Audience, brand, prior articles, constraints |
| Standard | What does good look like? | Useful, specific, not hype, evidence-backed |
| Boundaries | What should it avoid? | No fake stats, no generic tool list, no invented links |
| Verification | How will we know it worked? | Word count, source check, SEO fit, human review |

This frame is more important than any single prompt template.

Templates age.

The frame survives.

It also makes your requests easier to debug. If the output is bad, you can ask which part failed. Did you define the outcome poorly? Did you omit context? Did you forget the standard? Did you fail to specify verification?

Most AI failures are not mysterious.

They are underspecified work orders.
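The five-part frame maps naturally onto a small data structure. A minimal Python sketch, assuming you assemble requests as plain text before sending them to whatever model you use; `WorkOrder`, `missing_parts`, and `render` are illustrative names, not any tool's API. The useful part is `missing_parts`, which answers "which part failed?" before the request is even sent.

```python
from dataclasses import dataclass, fields


@dataclass
class WorkOrder:
    outcome: str = ""       # What should exist at the end?
    context: str = ""       # What does the model need to know?
    standard: str = ""      # What does good look like?
    boundaries: str = ""    # What should it avoid?
    verification: str = ""  # How will we know it worked?

    def missing_parts(self) -> list[str]:
        """Name the parts left blank: the usual suspects when output disappoints."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Turn the five parts into a plain-text request, refusing incomplete orders."""
        missing = self.missing_parts()
        if missing:
            raise ValueError(f"underspecified work order, missing: {', '.join(missing)}")
        return "\n".join(
            f"{f.name.capitalize()}: {getattr(self, f.name)}" for f in fields(self)
        )


draft_order = WorkOrder(
    outcome="A Ghost-ready article draft",
    context="Audience: non-technical founders; topic: a weekly research workflow",
)
print(draft_order.missing_parts())  # standard, boundaries, and verification are still blank
```

Making `render` refuse incomplete orders is a deliberate choice: it turns "the model gave me something vague" into "I never said what good looks like."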

Metacognition means thinking about your thinking.

With AI, it means watching the collaboration itself.

You ask:

This is the difference between a passive user and a serious operator.

A passive user accepts the first answer.

A serious operator asks the model to reveal risks, compare alternatives, and identify weak links.

One of my most useful follow-up questions is:

What important risk did you not consider in your first answer?

It is simple. It works often enough that I use it constantly.

Another useful habit is keeping a small error log.

Not a dramatic one. Just a simple note:

| Failure | What caused it | What I will change next time |
|---|---|---|
| The AI invented a source | I asked for citations too late | Require source URLs before drafting |
| The article sounded generic | I gave topic but not point of view | Add my thesis and personal example first |
| The code ran locally but failed in production | I accepted the happy path | Ask for edge cases and run tests |
| The workflow became too complex | I automated before understanding the task | Write the manual checklist first |

This is how you stop making the same AI mistake every week.

The best AI users I know are not people who never get bad outputs.

They are people who notice patterns in bad outputs and upgrade the workflow.

That is metacognition in practice.
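If the error log lives in a script rather than a notebook, spotting repeated patterns can be automated. A minimal Python sketch, assuming each entry's cause is written as a short, reusable label so that repeats can be counted; the `ErrorLog` class is hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Failure:
    what: str    # what went wrong
    cause: str   # what caused it, as a short reusable label
    change: str  # what I will change next time


class ErrorLog:
    def __init__(self) -> None:
        self.entries: list[Failure] = []

    def record(self, what: str, cause: str, change: str) -> None:
        self.entries.append(Failure(what, cause, change))

    def recurring(self, min_count: int = 2) -> list[tuple[str, int]]:
        """Causes seen at least `min_count` times, most frequent first."""
        counts = Counter(f.cause for f in self.entries)
        return [(cause, n) for cause, n in counts.most_common() if n >= min_count]


log = ErrorLog()
log.record("The AI invented a source", "citations requested too late",
           "Require source URLs before drafting")
log.record("The article sounded generic", "no point of view given",
           "Add my thesis and personal example first")
log.record("A summary quoted a fake statistic", "citations requested too late",
           "Require source URLs before drafting")
print(log.recurring())  # the cause worth fixing in the workflow, not just the output
```

The counting only works if causes are labeled consistently, which is itself a small metacognition exercise: naming the cause the same way twice means you recognized the pattern.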

AI can produce polished writing.

Polished is not the same as real.

When every article can sound clean, lived experience becomes more valuable.

The parts readers remember are often not the perfect sentences. They remember the scar tissue:

AI can help you express those things.

It cannot have lived them for you.

That is why authenticity is not decoration. It is evidence.

This matters for SEO too.

Search engines and AI answer engines are not only trying to identify matching keywords. They are trying to surface pages that satisfy real intent. A page that repeats the same advice as 200 other pages is easy to replace. A page built from lived experience, specific decisions, real tradeoffs, and verifiable examples is harder to replace.

That is why I do not treat "experience" as a branding trick.

Experience changes the content.

It changes what you notice.

It changes what you warn people about.

It changes what you refuse to recommend.

For this article, the useful part is not merely "learn taste, judgment, and systems thinking." Many people can write that sentence. The useful part is the transition from Make and n8n into Claude Code and OpenClaw, because that is where the abstract idea becomes visible: my value moved from operating tools to defining systems.

If your content has no such concrete transition, it will probably sound like AI wrote it.

Even if a human did.

AI can do points.

Humans must connect points into systems.

A single prompt is a point.

A weekly research workflow is a system.

A content operation with source intake, drafting, review, image generation, publishing, and measurement is a system.

Systems thinking asks:

What repeats?

What should be standardized?

Where does human judgment enter?

What can fail silently?

What should be logged?

What should never be automated?

This is where the real gains are.

The person who can design the workflow owns the output.

The person who only knows the tool is replaceable by the next interface.

A beginner-friendly rule is this:

Do it manually once.

Write the checklist.

Then automate the checklist.

People often skip the middle step.

They jump from a messy task to automation, then wonder why the AI workflow produces messy results.

The checklist is the bridge.

It turns tacit judgment into explicit structure. It shows which inputs matter, which decisions require a human, which steps can be delegated, and which checks must happen before publishing.

For example, a content workflow might become:

| Step | Human or AI? | Why |
|---|---|---|
| Choose the thesis | Human | This requires point of view |
| Gather source material | AI first, human verifies | AI expands the surface area |
| Draft outline | AI with constraints | Structure can be proposed quickly |
| Judge information gain | Human | Value is contextual |
| Write first draft | AI plus human edits | Speed with direction |
| Fact-check claims | Human-led with tools | Trust is not delegated |
| Publish and measure | System plus human review | Feedback improves the next run |

That is human-AI collaboration as a system, not a slogan.
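Because the table is already a checklist, "automate the checklist" can start as plain data. A Python sketch under simplifying assumptions: each step gets one of three owners (the table's "AI with constraints" collapses to `ai`, and "human-led with tools" to `human`), and `plan` only reports which steps could run unattended and where a person must sign off. It calls no model; `Step`, `plan`, and the owner labels are hypothetical.

```python
from dataclasses import dataclass

# Three owner labels, a simplification of the "Human or AI?" column.
HUMAN, AI, AI_THEN_HUMAN = "human", "ai", "ai_then_human"


@dataclass
class Step:
    name: str
    owner: str


CONTENT_WORKFLOW = [
    Step("Choose the thesis", HUMAN),
    Step("Gather source material", AI_THEN_HUMAN),
    Step("Draft outline", AI),
    Step("Judge information gain", HUMAN),
    Step("Write first draft", AI_THEN_HUMAN),
    Step("Fact-check claims", HUMAN),
    Step("Publish and measure", AI_THEN_HUMAN),
]


def plan(steps: list[Step]) -> list[str]:
    """Build a run plan that marks where human judgment enters."""
    lines = []
    for i, step in enumerate(steps, start=1):
        if step.owner == AI:
            lines.append(f"{i}. {step.name}: automate")
        elif step.owner == AI_THEN_HUMAN:
            lines.append(f"{i}. {step.name}: automate, then human review")
        elif step.owner == HUMAN:
            lines.append(f"{i}. {step.name}: human decision")
        else:
            raise ValueError(f"unknown owner {step.owner!r} for {step.name!r}")
    return lines


print("\n".join(plan(CONTENT_WORKFLOW)))
```

Keeping the owners as data rather than burying them in automation code makes the question "where does human judgment enter?" answerable by reading one list.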

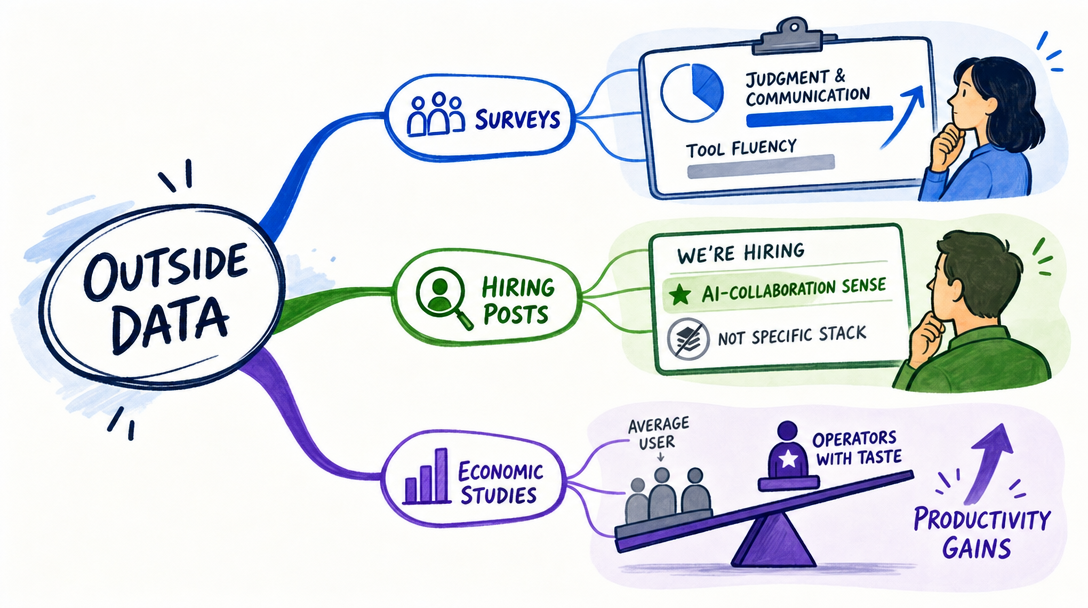

This is not only my personal experience.

The World Economic Forum's Future of Jobs Report 2025 says analytical thinking remains the most sought-after core skill among employers, with seven out of 10 companies considering it essential in 2025. The same report lists AI and big data, networks and cybersecurity, and technological literacy among the fastest-growing skills, while also pointing to creative thinking, resilience, flexibility, and lifelong learning as rising skills.

That combination matters.

The future is not "only learn AI tools."

It is "learn AI tools and keep the human skills that make the tools useful."

Microsoft's 2025 Work Trend Index points in the same direction. It describes "Frontier Firms" built around human-agent teams: human-led and agent-operated. In that model, humans set direction, manage exceptions, and decide what the agents should optimize for.

That is very close to the three-level model in this article.

Doer.

Director.

Architect.

The names differ. The shape is the same.

The Harvard and BCG "jagged frontier" research adds one more important warning: AI does not improve all work equally. It helps strongly inside some task boundaries and can hurt performance outside them when people trust the output too much.

That is the hidden reason judgment matters.

AI skill is not only knowing when to use AI.

It is knowing when the task has crossed the frontier.

For a beginner, this means you should separate tasks into three buckets:

| Bucket | What it means | Good beginner action |
|---|---|---|
| Green zone | AI is likely to help | Use AI freely, then review |
| Yellow zone | AI can help but may miss context | Use AI for options, keep human judgment central |
| Red zone | Wrong output can create real damage | Slow down, verify, use expert review |

Writing a draft is usually green or yellow.

Acting on AI-generated legal advice is red.

Summarizing a public report is usually green.

Making a business decision from an unverified summary is yellow or red.

Generating code for a personal script may be green.

Changing production infrastructure without tests is red.

This is where "AI skills" becomes a serious professional topic instead of a productivity slogan. The question is not "Can the model answer?" The question is "Can I judge the answer well enough for this use case?"

That is why the most useful AI education does not only teach prompts.

It teaches boundaries.
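The bucket rule can be reduced to two yes/no questions asked in order of severity: could a wrong output cause real damage, and does the task depend on context the model probably lacks? A minimal Python sketch; the two parameters are my own simplification of the table, not a standard risk framework, and the yes/no answers in the examples are judgment calls you would make per task.

```python
def zone(can_cause_real_damage: bool, needs_missing_context: bool) -> tuple[str, str]:
    """Map a task to (bucket, beginner action) following the three-bucket table.

    Damage is checked first, so a task that is both risky and context-heavy
    lands in red rather than yellow.
    """
    if can_cause_real_damage:
        return "red", "Slow down, verify, use expert review"
    if needs_missing_context:
        return "yellow", "Use AI for options, keep human judgment central"
    return "green", "Use AI freely, then review"


# Examples in the spirit of the text, with hand-picked answers to the two questions.
EXAMPLES = {
    "Summarize a public report": zone(False, False),
    "Draft an article in my voice": zone(False, True),
    "Change production infrastructure without tests": zone(True, False),
}

for task, (bucket, action) in EXAMPLES.items():
    print(f"{bucket:>6}  {task}: {action}")
```

Checking damage before context is the design choice that matters: the worst plausible outcome, not the most likely one, decides how much verification a task needs.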

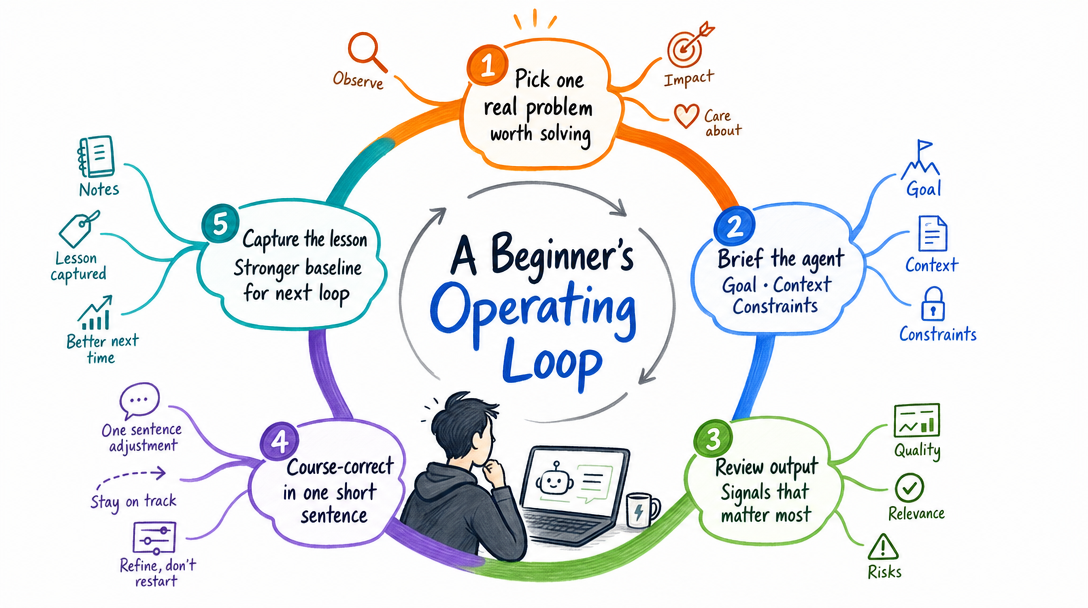

If I had to compress the whole article into one repeatable loop, it would be this:

Define -> Delegate -> Inspect -> Improve -> Systematize

Start with the desired result.

Do not begin with the tool.

Instead of "I need to learn n8n," say:

I need a repeatable workflow that turns source material into publishable research notes every Friday.

That sentence already contains more upside than a tool tutorial, because it defines the work.

Give the AI a bounded task.

The boundary matters.

Do not dump the whole ambiguous project into the model and hope it understands your life. Give it one part of the work with context, constraints, and output format.

Treat the first output as material, not truth.

Ask what is missing. Ask what is weak. Compare it with your standard. If the task contains facts, verify the facts.

Do not only fix the output.

Fix the instruction.

If the AI made a predictable mistake, your workflow should change so the mistake is less likely next time.

When the same task repeats, turn it into a checklist, saved prompt, script, template, or agent workflow.

This is the moment where AI stops being a conversation and starts becoming infrastructure.

That is the move from director to architect.
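The Define -> Delegate -> Inspect -> Improve -> Systematize loop can be sketched as a function in which the instruction, not the output, is what improves between rounds. A minimal Python sketch, assuming `generate` is any callable that sends text to a model and returns text; a deterministic fake stands in here so the example runs offline. `run_loop`, `fake_model`, and the "Also ensure" wording are illustrative, not a real API, and using check names as instruction text is a simplification. Inspection is reduced to named pass/fail checks, which covers only what can be checked mechanically; judgment still happens outside the loop.

```python
from typing import Callable

Check = Callable[[str], bool]


def run_loop(
    outcome: str,
    generate: Callable[[str], str],
    checks: dict[str, Check],
    max_rounds: int = 3,
) -> tuple[str, str]:
    """Define -> Delegate -> Inspect -> Improve, returning (output, final instruction).

    The final instruction is the piece worth Systematizing: saving it as a
    template keeps every fix the loop needed.
    """
    instruction = outcome  # Define: start from the desired result, not the tool
    failed: list[str] = []
    for _ in range(max_rounds):
        output = generate(instruction)  # Delegate: one bounded task
        # Inspect: compare the output with a written standard
        failed = [name for name, check in checks.items() if not check(output)]
        if not failed:
            return output, instruction
        # Improve: fix the instruction, not only this output
        instruction += "\nAlso ensure: " + ", ".join(failed)
    raise RuntimeError(f"still failing after {max_rounds} rounds: {failed}")


def fake_model(instruction: str) -> str:
    """Offline stand-in for a model call: adds a source only when asked to."""
    draft = "Draft for: " + instruction.splitlines()[0]
    if "cites a source" in instruction:
        draft += " [source: https://example.com]"
    return draft


checks = {"cites a source": lambda text: "[source:" in text}
output, template = run_loop("Friday research notes", fake_model, checks)
print(template)  # the instruction to save and reuse next Friday
```

Returning the final instruction alongside the output is what connects the loop to systematizing: the fix survives into the next run instead of being applied once by hand.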

Here is the practical version.

Do not start by learning every AI tool.

Practice the skill behind the tool.

| Skill | Practice this week | Proof you improved |
|---|---|---|
| Taste and judgment | Generate 20 options, choose 3, explain why 17 failed | You can name the standard, not just the preference |
| Asking better questions | Rewrite 5 vague prompts into structured work orders | The output improves without changing tools |
| Metacognition | Ask for risks, assumptions, and missing evidence | You catch a weakness before publishing |
| Authenticity | Add one real mistake or reversal to a draft | The piece sounds less generic |
| Systems thinking | Turn one repeated task into a checklist or workflow | You can run it again next week |

This is not glamorous.

It works.

The AI era rewards people who turn vague intention into operational clarity.

One more note: do not practice these skills only on toy tasks.

Use a real task you already care about.

If you are a founder, use customer research.

If you are a creator, use content production.

If you are a developer, use a small internal tool.

If you are a manager, use weekly reporting.

AI learning becomes much faster when the task has consequences, because consequences force judgment.

If you want to practice this article instead of just reading it, do this for seven days.

| Day | Exercise | Output |
|---|---|---|
| 1 | Pick one weekly task you dislike | A one-sentence outcome |
| 2 | Describe the task as input, process, output | A workflow table |
| 3 | Ask AI to run one part of the task | A first draft or result |
| 4 | Judge the output with a written standard | A pass/fail checklist |
| 5 | Ask AI what risks or assumptions it missed | A risk list |
| 6 | Add your lived experience or judgment | A non-generic version |
| 7 | Save the reusable process | A prompt, checklist, or workflow note |

At the end, ask one question:

Did I only save time, or did I improve the way I think about the work?

Saving time is good.

Improving thinking is better.

Beginners often ask, "How do I know I am getting better at AI?"

Do not measure it by how many tools you have tried.

Measure it by the quality of your workflow.

Here are better signals:

| Signal | Weak version | Strong version |
|---|---|---|
| Prompting | You use longer prompts | You give clearer work orders |
| Output quality | You accept the first draft | You can diagnose why it is weak |
| Research | You ask for facts | You verify sources and compare evidence |
| Automation | You connect tools | You know where human judgment belongs |
| Learning | You chase new apps | You improve one repeatable workflow |
| Judgment | You say "looks good" | You can name the standard |

The strongest signal is transfer.

If you learn a pattern in writing and can apply it to research, coding, or operations, you are not just learning a tool.

You are learning an AI-era operating model.

That is what matters.

The common mistake is believing AI makes human taste less important.

It does the opposite.

When output becomes abundant, selection becomes scarce.

When execution becomes cheaper, direction becomes more valuable.

When tools become easier, taste becomes the difference.

Do not become a person who only collects tools.

Become the person who knows what should be built, why it should exist, what good looks like, and where AI should stop.

That is the AI skill stack that matters.

This article is the mindset layer.

Use it with the practical path:

| Next article | Read it when | Why it matters |
|---|---|---|
| Learn AI for Beginners: 4-Level Roadmap | You want a step-by-step learning path | It turns mindset into a roadmap |
| AI Terminology for Beginners | The terms still feel blurry | It gives you the working vocabulary |
| AI Agents for Beginners: Ask Better | You want better outputs from agents | It teaches the delegation loop |
| AI Stack Explained | You confuse model, product, agent, and workflow | It maps the stack |

The sequence is simple:

Mindset -> Vocabulary -> Asking -> Workflow -> Systems

That is how AI stops being a pile of tools.

It becomes a way of working.

The AI skills that matter most are taste and judgment, asking better questions, metacognition, authenticity, and systems thinking. Tool skills still matter, but they compound only when you know what outcome you want.

Execution still matters, but it is no longer the only bottleneck. As AI handles more routine execution, human value moves toward defining outcomes, setting constraints, judging quality, and designing workflows.

Using AI as a tool means asking for one-off help. Using AI as a system means designing repeatable workflows where agents handle defined steps, humans set direction, and verification keeps quality under control.

Centaur mode separates human and AI work into clear parts. Cyborg mode blends human and AI work more tightly, with the human and AI shaping the output together through rapid feedback loops.

Start with one weekly task. Describe the desired outcome, give context and constraints, let AI produce a first pass, then judge what is wrong, what is useful, and what should become a repeatable workflow.

The AI era does not only reward people who know more tools.

It rewards people who can define better outcomes.

It rewards people who can ask sharper questions.

It rewards people who can judge output, preserve authenticity, and design systems that make good work repeatable.

Your value does not disappear when AI gets stronger.

It moves upward.

From doing.

To directing.

To architecting.

Know what you want.

Then hand off the work.

— Leo

[1] World Economic Forum, The Future of Jobs Report 2025, accessed 2026-04-24.

[2] Microsoft WorkLab, 2025: The year the Frontier Firm is born, accessed 2026-04-24.

[3] Harvard Business School, Navigating the Jagged Technological Frontier, accessed 2026-04-24.

[4] Reddit, AI Skills to Master in 2026, accessed 2026-04-24.
